Digital Human Generation

The video and audio of the digital human are highly synchronized to achieve natural and smooth lip-syncing.

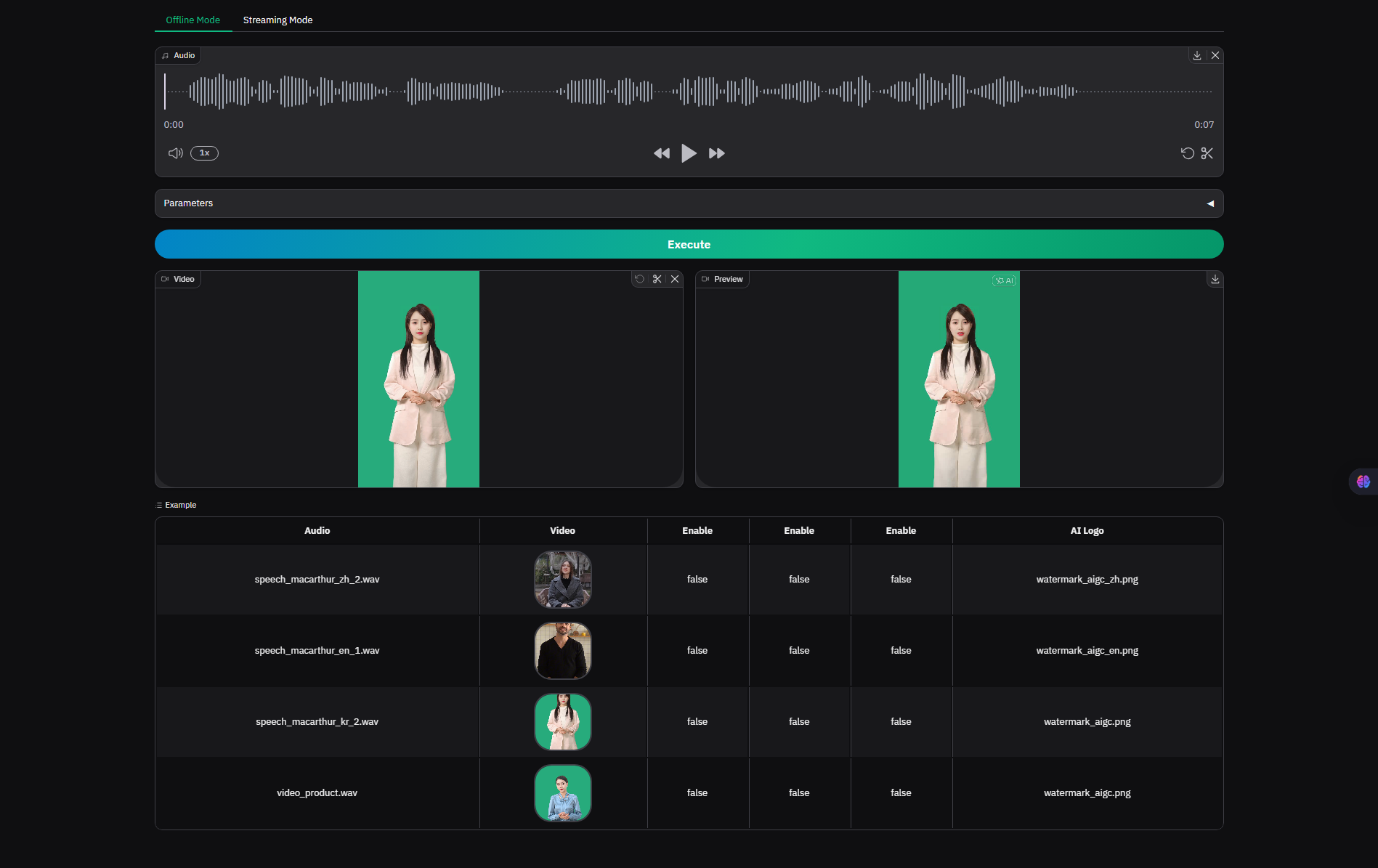

Preview

| Offline | Streaming |
|---|---|
|  |  |

Example

Offline Mode

Video- and audio-driven: supports cross-language use, lip-syncing, watermarking, super-resolution, and loop playback.

| Original 1080P | Chinese | English | Japanese | Korean |
|---|---|---|---|---|

| Original 480P | Chinese | English | Japanese | Korean |
|---|---|---|---|---|

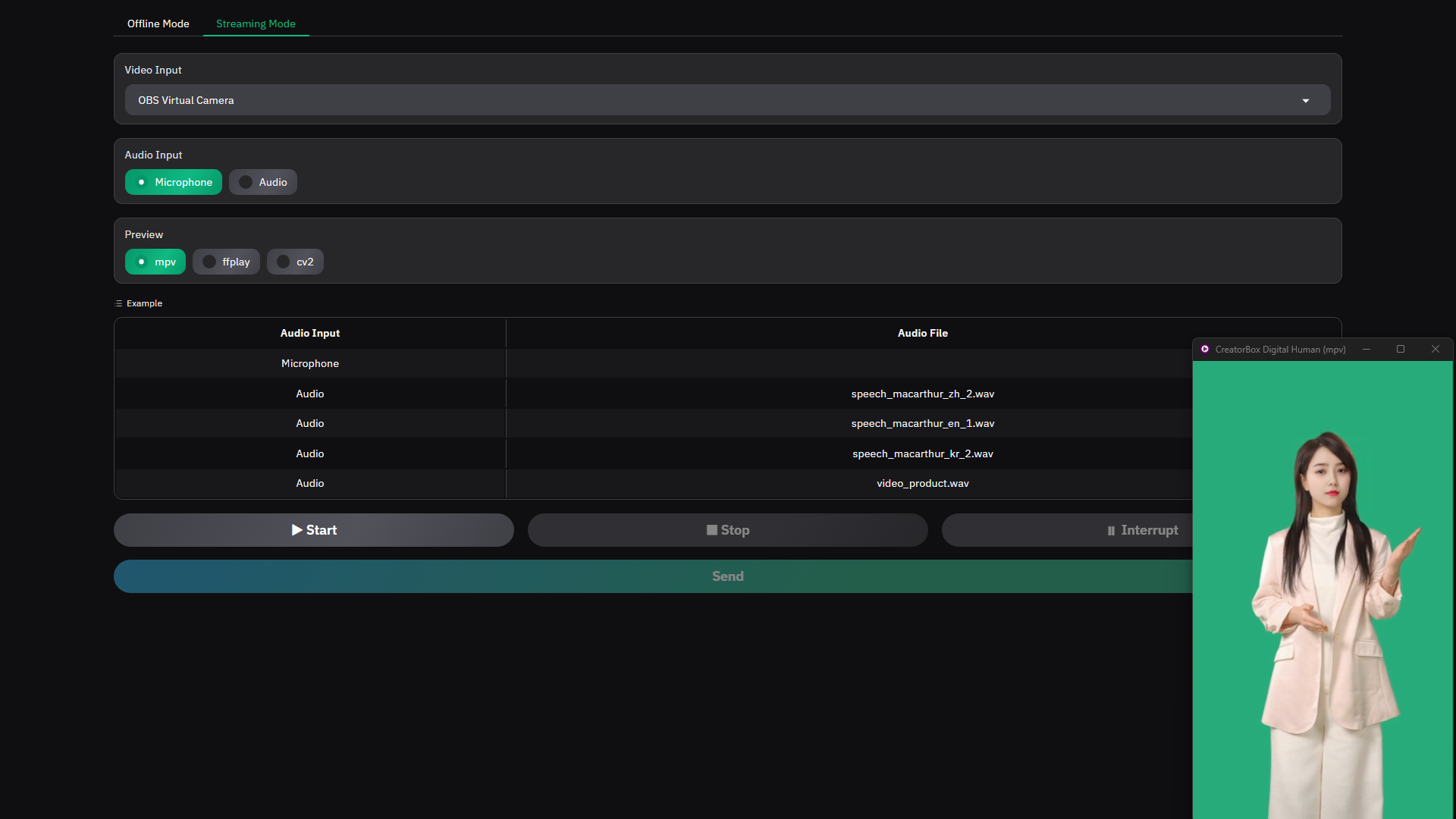

Streaming Mode

Camera- and microphone-driven: supports cross-language communication, lip-syncing, real-time interruption, real-time insertion, and real-time switching.

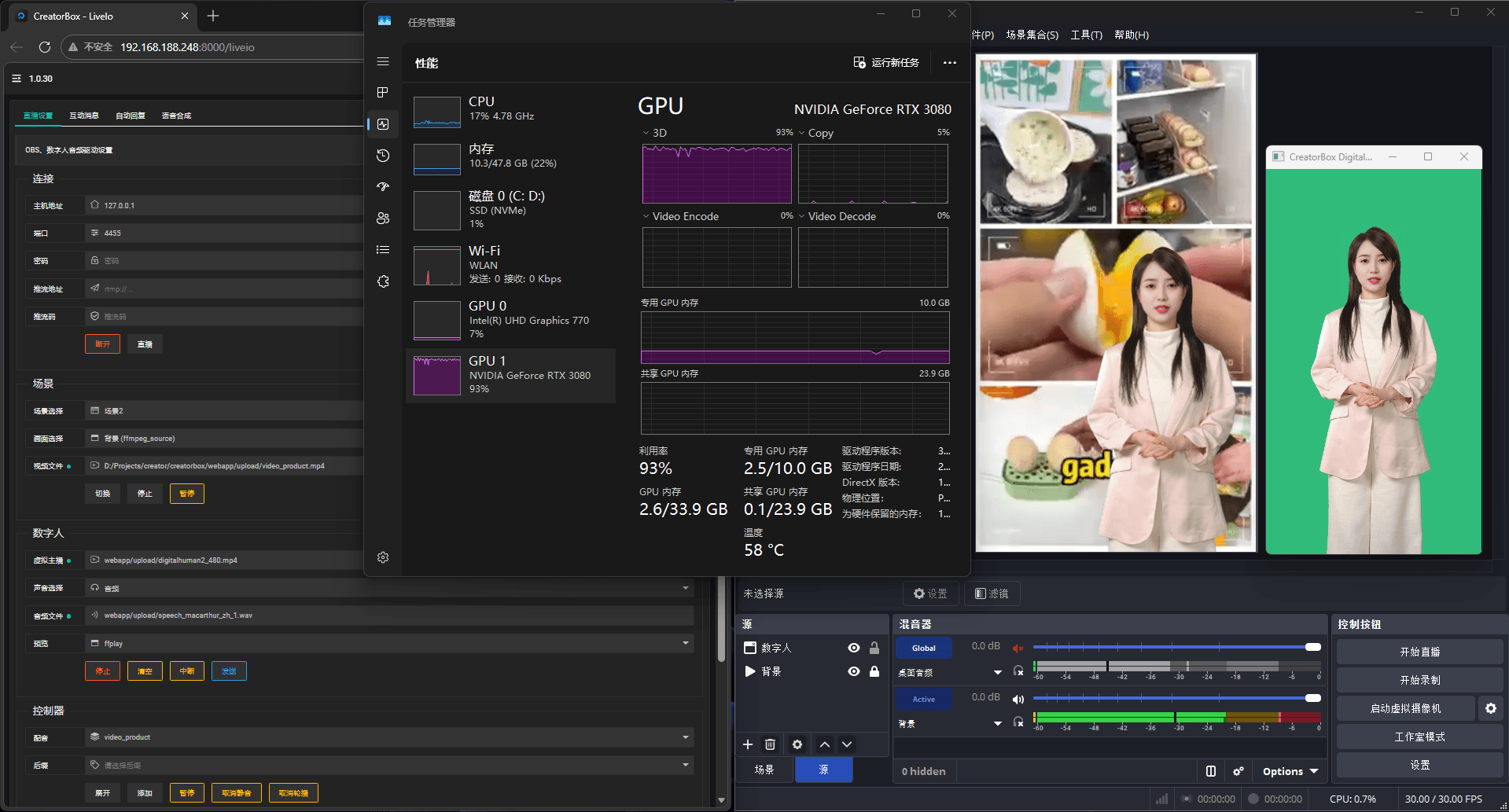

Extension

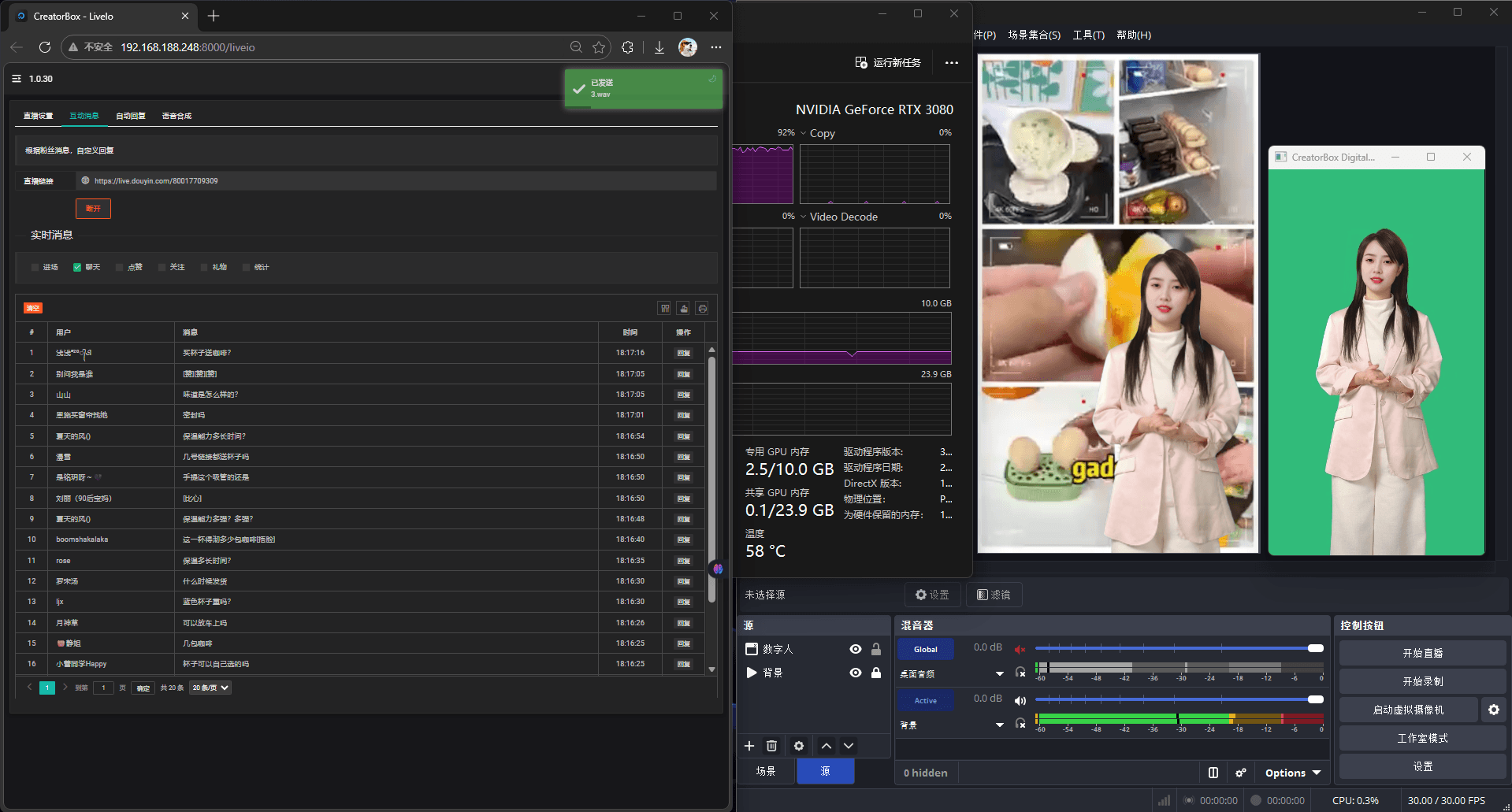

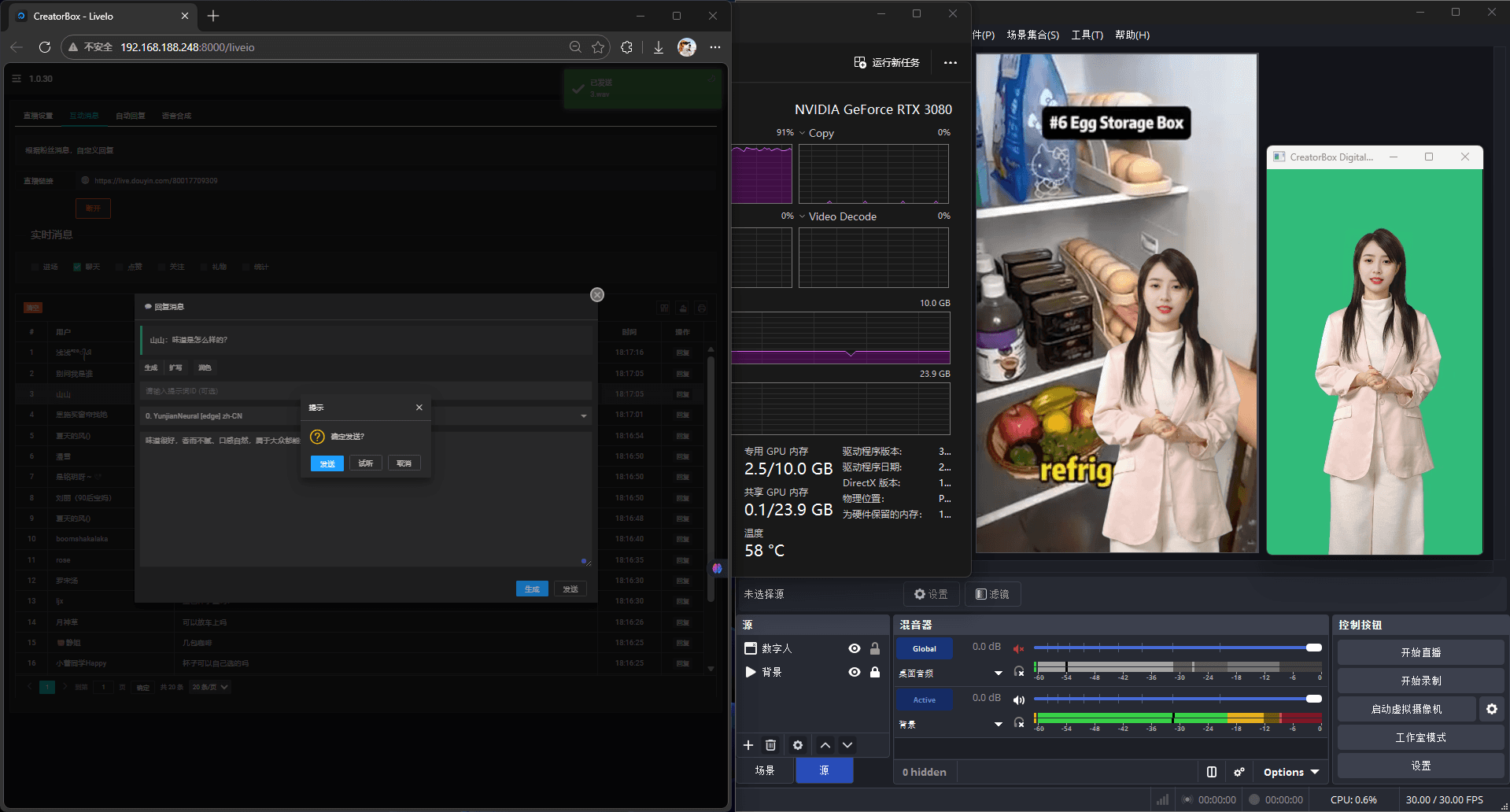

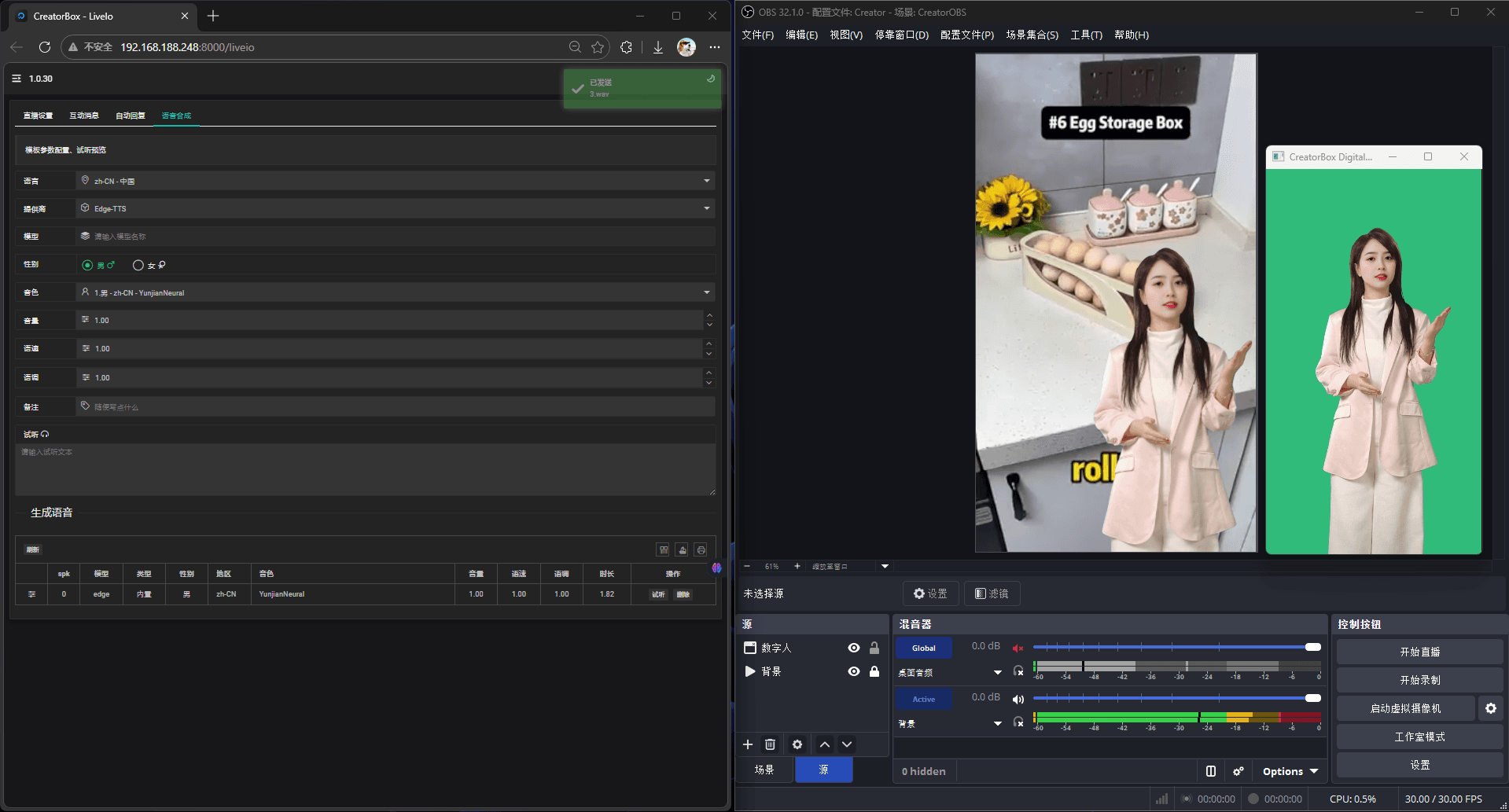

AI Digital Human Assisted Live Streaming lets a digital human interact with viewers in real time through live stream messages. It offers live stream settings, real-time message replies, voice template cloning, and other functions to help streamers create high-quality live stream content.

| Live Streaming Settings | Real-time Messaging | Message Reply | Voice Templates | Real-time Integration (new) |
|---|---|---|---|---|
|  |  |  |  |  |

📅 Planned Support

💡 Preview Modes new

The streaming mode supports four preview methods, which can be selected according to actual usage scenarios:

| Mode | Description | Video Source Limitation |
|---|---|---|
| `obs` | Push directly to OBS Virtual Camera, no display window | Video only |
| `cv2` | Local window preview (default) | Camera / Video |
| `ffplay` | Window preview using ffplay (FFmpeg required) | Camera / Video |
| `flow` |  |  |

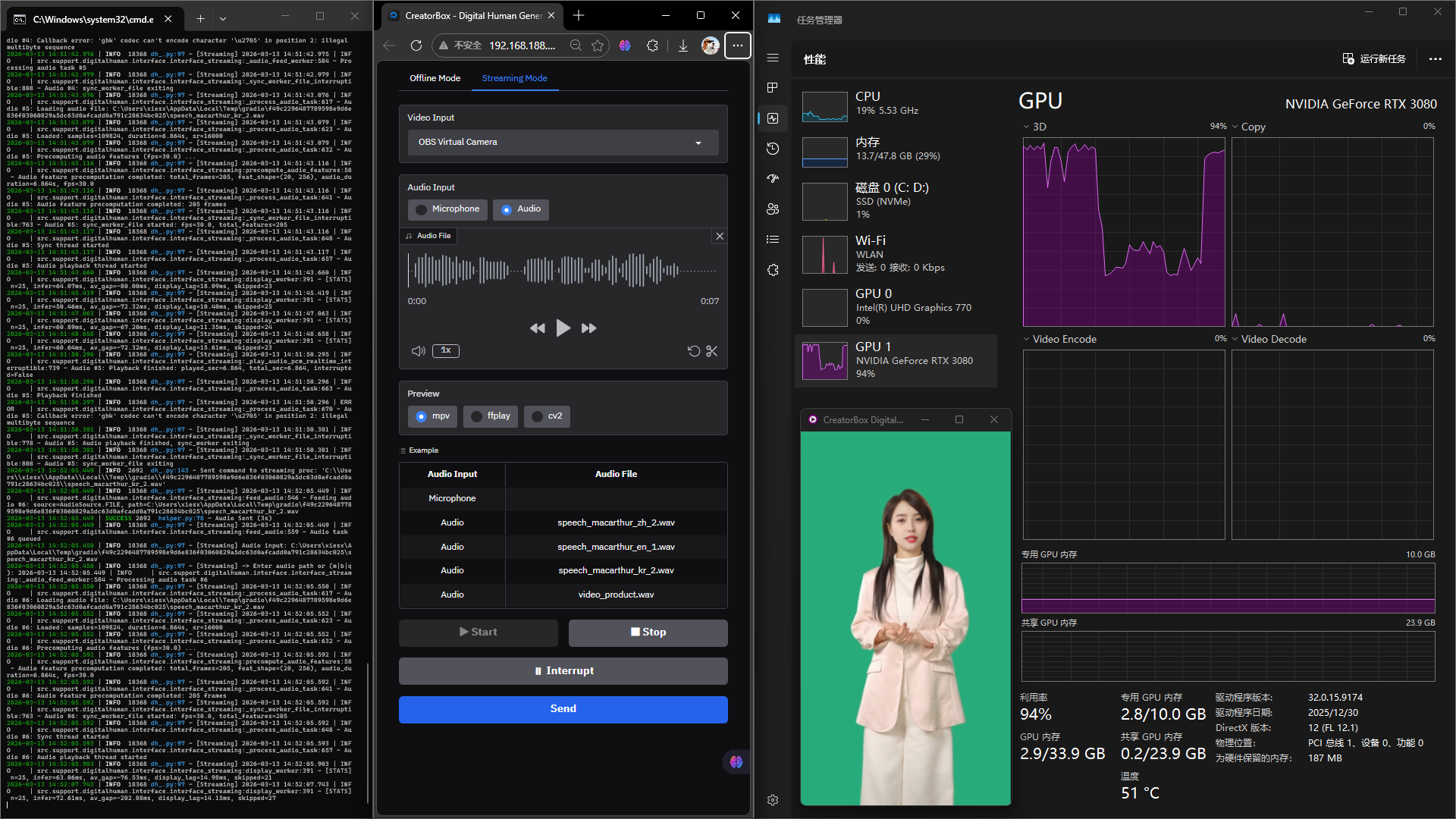
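The table above can be turned into a small pre-flight check before starting a stream. The following is only a sketch: `PREVIEW_MODES`, `DEFAULT_MODE`, and `check_preview_mode` are hypothetical names introduced for illustration and are not part of the project's API. The `flow` mode is left out because its description and source limitation are not given here.

```python
import shutil

# Allowed video sources per preview mode, copied from the table above.
# Hypothetical structure for illustration; `flow` is omitted because the
# document does not list its details.
PREVIEW_MODES = {
    "obs": {"video"},
    "cv2": {"camera", "video"},
    "ffplay": {"camera", "video"},
}
DEFAULT_MODE = "cv2"  # the table marks cv2 as the default


def check_preview_mode(mode: str, source: str) -> list[str]:
    """Return a list of problems; an empty list means the combination looks usable."""
    if mode not in PREVIEW_MODES:
        return [f"unknown or undocumented preview mode: {mode!r}"]
    problems = []
    if source not in PREVIEW_MODES[mode]:
        problems.append(f"mode {mode!r} does not accept source {source!r}")
    # ffplay ships with FFmpeg, so it must be discoverable on PATH.
    if mode == "ffplay" and shutil.which("ffplay") is None:
        problems.append("ffplay not found on PATH (FFmpeg required)")
    return problems


if __name__ == "__main__":
    for mode, source in [("obs", "camera"), (DEFAULT_MODE, "camera")]:
        print(mode, source, check_preview_mode(mode, source) or "ok")
```

Returning a list of problems rather than raising keeps the check easy to surface in a settings UI or a startup log.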

⚡ Performance Reference new

The following log snippet is from a real-time digital human inference run at 30 fps and is for reference only. Actual performance may vary with device configuration and other factors.

[DH] -> Enter audio path or (!path|!m|b|c|q): Audio input: D:\Projects\creator\creator-box\webapp\upload\video_product.wav [Interrupt]

[DH] 2026-05-11 14:17:39.189 | INFO 1364 interface_streaming_v2.py:579 - Feeding audio #0: source=AudioSource.FILE, path=D:\Projects\creator\creator-box\webapp\upload\video_product.wav, interrupt=True

[DH] 2026-05-11 14:17:39.189 | INFO 1364 interface_streaming_v2.py:594 - Audio task #0 set as priority (interrupt mode), pending queue untouched

[DH] -> Enter audio path or (!path|!m|b|c|q): 2026-05-11 14:17:39.365 | INFO 1332 interface_streaming_v2.py:635 - Processing audio task #0 (interrupt=True)

[DH] 2026-05-11 14:17:39.365 | INFO 1332 interface_streaming_v2.py:672 - Audio #0: Loading audio file: D:\Projects\creator\creator-box\webapp\upload\video_product.wav

[DH] 2026-05-11 14:17:40.782 | INFO 1332 interface_streaming_v2.py:678 - Audio #0: Loaded: samples=489291, duration=30.581s, sr=16000

[DH] 2026-05-11 14:17:40.782 | INFO 1332 interface_streaming_v2.py:687 - Audio #0: Precomputing audio features (fps=30.0) ...

[DH] 2026-05-11 14:17:41.012 | INFO 1332 features.py:44 - Wenet features cached: key=wenet_feat:e31898fd38810..., shape=(784, 256)

[DH] 2026-05-11 14:17:41.014 | INFO 1332 features.py:62 - Audio features precomputation completed: total_frames=917, feat_shape=(20, 256), audio_duration=30.581s, fps=30.0

[DH] 2026-05-11 14:17:41.014 | INFO 1332 interface_streaming_v2.py:696 - Audio #0: Audio feature precomputation completed: 917 frames

[DH] 2026-05-11 14:17:41.015 | INFO 1332 interface_streaming_v2.py:713 - Audio #0: Sync thread started

[DH] 2026-05-11 14:17:41.015 | INFO 1660 interface_streaming_v2.py:837 - Audio #0: sync_worker_file started: fps=30.0, total_features=917

[DH] 2026-05-11 14:17:41.018 | INFO 1332 interface_streaming_v2.py:722 - Audio #0: Audio playback thread started

[DH] 2026-05-11 14:17:42.029 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=29.74ms, av_gap=-75.33ms, display=0.15ms, skipped=0

[DH] 2026-05-11 14:17:43.053 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.69ms, av_gap=12.22ms, display=0.25ms, skipped=1

[DH] 2026-05-11 14:17:44.080 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.76ms, av_gap=7.11ms, display=0.03ms, skipped=1

[DH] 2026-05-11 14:17:45.101 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.94ms, av_gap=8.67ms, display=0.08ms, skipped=0

[DH] 2026-05-11 14:17:46.132 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.81ms, av_gap=12.22ms, display=0.13ms, skipped=1

[DH] 2026-05-11 14:17:47.156 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.02ms, av_gap=11.33ms, display=0.10ms, skipped=1

[DH] 2026-05-11 14:17:48.177 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.23ms, av_gap=7.33ms, display=0.03ms, skipped=1

[DH] 2026-05-11 14:17:49.197 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.00ms, av_gap=8.67ms, display=0.02ms, skipped=0

[DH] 2026-05-11 14:17:50.229 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.90ms, av_gap=10.22ms, display=0.28ms, skipped=1

[DH] 2026-05-11 14:17:51.251 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.60ms, av_gap=11.56ms, display=0.02ms, skipped=1

[DH] 2026-05-11 14:17:52.283 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.82ms, av_gap=9.11ms, display=0.57ms, skipped=1

[DH] 2026-05-11 14:17:53.306 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.36ms, av_gap=7.33ms, display=0.49ms, skipped=0

[DH] 2026-05-11 14:17:54.327 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.02ms, av_gap=10.89ms, display=0.52ms, skipped=1

[DH] 2026-05-11 14:17:55.353 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.79ms, av_gap=9.33ms, display=0.05ms, skipped=1

[DH] 2026-05-11 14:17:56.384 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.94ms, av_gap=9.33ms, display=0.49ms, skipped=1

[DH] 2026-05-11 14:17:57.409 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=22.16ms, av_gap=4.44ms, display=0.49ms, skipped=1

[DH] 2026-05-11 14:17:58.430 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.05ms, av_gap=14.00ms, display=0.62ms, skipped=0

[DH] 2026-05-11 14:17:59.464 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.89ms, av_gap=11.33ms, display=0.56ms, skipped=1

[DH] 2026-05-11 14:18:00.492 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.17ms, av_gap=6.89ms, display=0.03ms, skipped=1

[DH] 2026-05-11 14:18:01.517 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.91ms, av_gap=9.78ms, display=0.57ms, skipped=1

[DH] 2026-05-11 14:18:02.544 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.99ms, av_gap=7.11ms, display=0.53ms, skipped=1

[DH] 2026-05-11 14:18:03.576 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.38ms, av_gap=11.33ms, display=0.64ms, skipped=1

[DH] 2026-05-11 14:18:04.607 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.24ms, av_gap=7.78ms, display=0.72ms, skipped=1

[DH] 2026-05-11 14:18:05.634 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.55ms, av_gap=12.67ms, display=0.62ms, skipped=0

[DH] 2026-05-11 14:18:06.666 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.82ms, av_gap=10.00ms, display=0.57ms, skipped=1

[DH] 2026-05-11 14:18:07.695 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=19.97ms, av_gap=12.22ms, display=0.07ms, skipped=1

[DH] 2026-05-11 14:18:08.720 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.54ms, av_gap=10.00ms, display=0.45ms, skipped=1

[DH] 2026-05-11 14:18:09.752 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=21.15ms, av_gap=9.78ms, display=0.57ms, skipped=1

[DH] 2026-05-11 14:18:10.775 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.40ms, av_gap=7.78ms, display=0.62ms, skipped=1

[DH] 2026-05-11 14:18:11.899 | INFO 7332 interface_streaming_v2.py:814 - Audio #0: Playback finished: played_sec=30.581, total_sec=30.581, interrupted=False

[DH] 2026-05-11 14:18:11.899 | INFO 1332 interface_streaming_v2.py:728 - Audio #0: Playback finished

[DH] [OK] Audio #0 playback completed

[DH] [COMPLETE] id=0 source=AudioSource.FILE path=D:\Projects\creator\creator-box\webapp\upload\video_product.wav interrupt=True

Metrics

- `phase` indicates the current phase (startup phase or stable phase).
- `n` indicates the number of frames processed per second (fixed at 30 frames).
- `infer` indicates the inference time per frame (the smaller the better).
- `av_gap` indicates the audio-video synchronization gap. Positive values indicate that the video frame time leads the audio (video "ahead"); negative values indicate that the video lags the audio (video "behind").
- `skipped` indicates the number of frames skipped. If inference takes too long to output a frame on time, some frames are skipped to maintain synchronization.
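To compare runs across devices, the `[STATS]` lines can be parsed and summarized. The sketch below assumes the line format is exactly as shown in the log above. `parse_stats` and `summarize` are hypothetical helpers written for this example, not functions from the project. The per-frame budget of `1000 / fps` milliseconds is a general rule of thumb for real-time output, not a threshold taken from the project.

```python
import re
from statistics import mean

# Matches the [STATS] lines in the log above (format assumed from that log).
STATS_RE = re.compile(
    r"\[STATS\] phase=(?P<phase>\w+), n=(?P<n>\d+), "
    r"infer=(?P<infer>-?[\d.]+)ms, av_gap=(?P<av_gap>-?[\d.]+)ms, "
    r"display=(?P<display>-?[\d.]+)ms, skipped=(?P<skipped>\d+)"
)


def parse_stats(lines):
    """Extract one dict per [STATS] line; other log lines are ignored."""
    rows = []
    for line in lines:
        m = STATS_RE.search(line)
        if m is None:
            continue
        rows.append({
            "phase": m["phase"],
            "n": int(m["n"]),
            "infer": float(m["infer"]),
            "av_gap": float(m["av_gap"]),
            "display": float(m["display"]),
            "skipped": int(m["skipped"]),
        })
    return rows


def summarize(rows, fps=30.0):
    """Aggregate parsed rows and compare inference time with the frame budget."""
    budget_ms = 1000.0 / fps  # time available per frame at the target fps
    return {
        "windows": len(rows),
        "mean_infer_ms": mean(r["infer"] for r in rows),
        "max_abs_av_gap_ms": max(abs(r["av_gap"]) for r in rows),
        "total_skipped": sum(r["skipped"] for r in rows),
        "frame_budget_ms": budget_ms,
        "within_budget": all(r["infer"] < budget_ms for r in rows),
    }


# Two [STATS] lines and one unrelated line, copied from the log above.
sample = [
    "[DH] 2026-05-11 14:17:42.029 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=29.74ms, av_gap=-75.33ms, display=0.15ms, skipped=0",
    "[DH] 2026-05-11 14:17:43.053 | INFO 2164 interface_streaming_v2.py:414 - [STATS] phase=steady, n=30, infer=20.69ms, av_gap=12.22ms, display=0.25ms, skipped=1",
    "[DH] 2026-05-11 14:17:41.015 | INFO 1332 interface_streaming_v2.py:713 - Audio #0: Sync thread started",
]
rows = parse_stats(sample)
summary = summarize(rows)
print(summary)
```

At 30 fps the frame budget is about 33.3 ms, so the logged `infer` values of roughly 20 to 30 ms leave headroom for display and synchronization.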

🎥 Video tutorial

Note

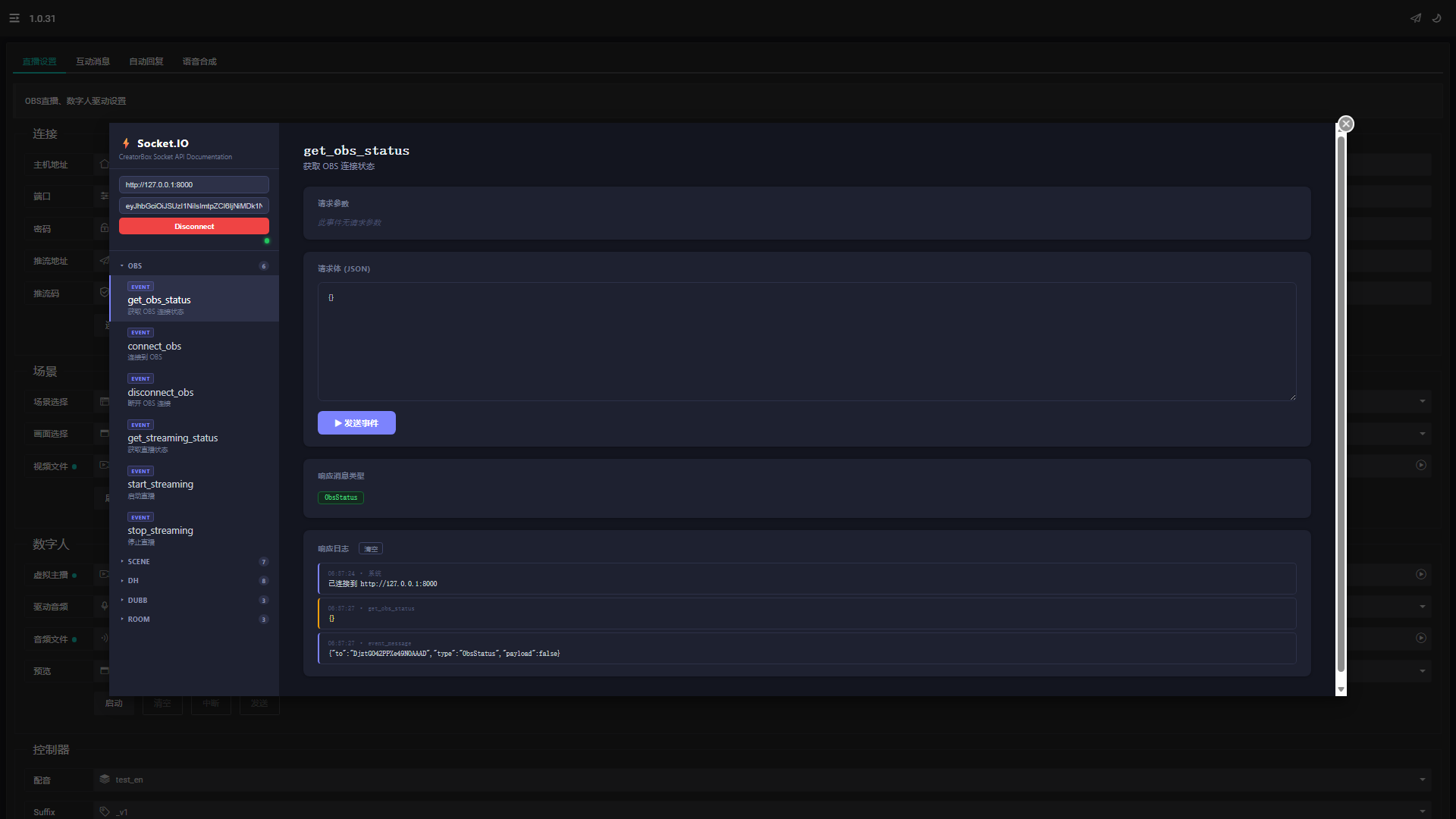

- `liveio` requires preprocessing of audio and video for the director and does not currently support real-time text generation. However, the `SocketIO API` can be used for secondary development and extension.

- When using `real-time mode`, it is recommended to disable unused `components` to reduce inference time and improve performance.

- To use the Microsoft Edge browser, you need to enable the `edge://settings/content/mediaAutoplay` setting.

- Do not use this feature to process illegal or non-compliant content. Infringing on others' privacy, copyright, or other legitimate rights and interests is prohibited.

- The content above is for demonstration purposes only. Some examples are sourced from the internet. If any content infringes on your rights, please contact us to request its removal.